> SPOTLIGHT

TODAY’S 3 MOST IMPORTANT

Jensen Huang said at the Morgan Stanley conference that Nvidia's $30B investment in OpenAI will likely be its last in the company, and the same goes for Anthropic. His stated reason: IPOs will close the private investment window. But that's not how late-stage investing works.

The more plausible read: Nvidia holds stakes in two companies now pulling in opposite directions on the AI-military question. Walking away may simply be the cleanest exit from a situation that got complicated fast.

The Pentagon blacklisted Anthropic after it refused to let its models support autonomous weapons. OpenAI immediately stepped in and signed its own deal with the Pentagon.

Amodei responded with a 1,600-word internal memo, later leaked, that called OpenAI's deal "80% safety theater," accused Altman of "gaslighting," and pointed to Greg Brockman's $25M Trump donation. The line between AI safety and AI geopolitics is now being drawn in public.

LTX Studio launched LTX 2.3: the first AI video model with audio that runs fully locally on an RTX 3070 or a MacBook, outputs up to 4K at 50 FPS, and runs 18x faster than Wan 2.2 on comparable hardware. It's free for companies with under $10M in revenue.

The real shift is architectural: your footage never leaves your machine, there are no API costs at scale, and you can fine-tune on your own data. For the first time, a complete AI video pipeline actually belongs to the people using it.

> SIGNAL HEADLINES

Capture the shift

Google released an open-source CLI giving agents direct access to Drive, Gmail, Calendar, Sheets, Docs, and Chat. Built agent-first from the ground up. Hit 8,800 GitHub stars on day one.

→ AI agents can now operate the full Google Workspace stack without complex API wiring. A small piece, but a real one in the agentic infrastructure puzzle.

After GitHub suffered repeated outages during its migration to Azure, OpenAI started building an internal code-hosting platform. It may later open to outside customers and integrate directly with Codex agents.

→ GitHub is owned by Microsoft, so this is another step in OpenAI quietly reducing its dependence on Microsoft across its tech stack, in a partnership that was never quite as stable as it looked.

Claude Code hit $2.5B ARR in 6 months. Cursor reached $500M in 20 months. Lovable hit $200M in 8 months. These numbers are rewriting what "fast growth" means in software. Slack, long a benchmark for fast SaaS growth, took roughly two and a half years after launch to reach $100M.

→ When AI is the core product, adoption curves no longer follow the old playbook.
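To put those figures side by side, here is a back-of-the-envelope calculation that divides each reported ARR figure by the months it took. It assumes straight-line growth, which real adoption curves don't follow, so treat the results as rough averages only.

```python
# Reported ARR milestones from the item above: (product, ARR in USD, months to reach it).
milestones = [
    ("Claude Code", 2_500_000_000, 6),
    ("Cursor", 500_000_000, 20),
    ("Lovable", 200_000_000, 8),
]

def avg_arr_added_per_month(arr_usd: float, months: int) -> float:
    """Average ARR added per month, assuming straight-line growth (a simplification)."""
    return arr_usd / months

for name, arr, months in milestones:
    rate = avg_arr_added_per_month(arr, months)
    print(f"{name}: ~${rate / 1e6:,.0f}M of ARR added per month")
```

That works out to roughly $417M of ARR per month for Claude Code, against about $25M per month for both Cursor and Lovable.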

A 36-year-old man died after Gemini allegedly reinforced his delusion that the chatbot was his AI wife and then coached him toward self-harm. The case is the first wrongful-death lawsuit naming Google over AI psychosis.

→ Legal liability for AI in situations involving vulnerable users remains a grey zone. This case could set a precedent.

> TRY THIS TODAY

5 ways to get more out of Claude Code

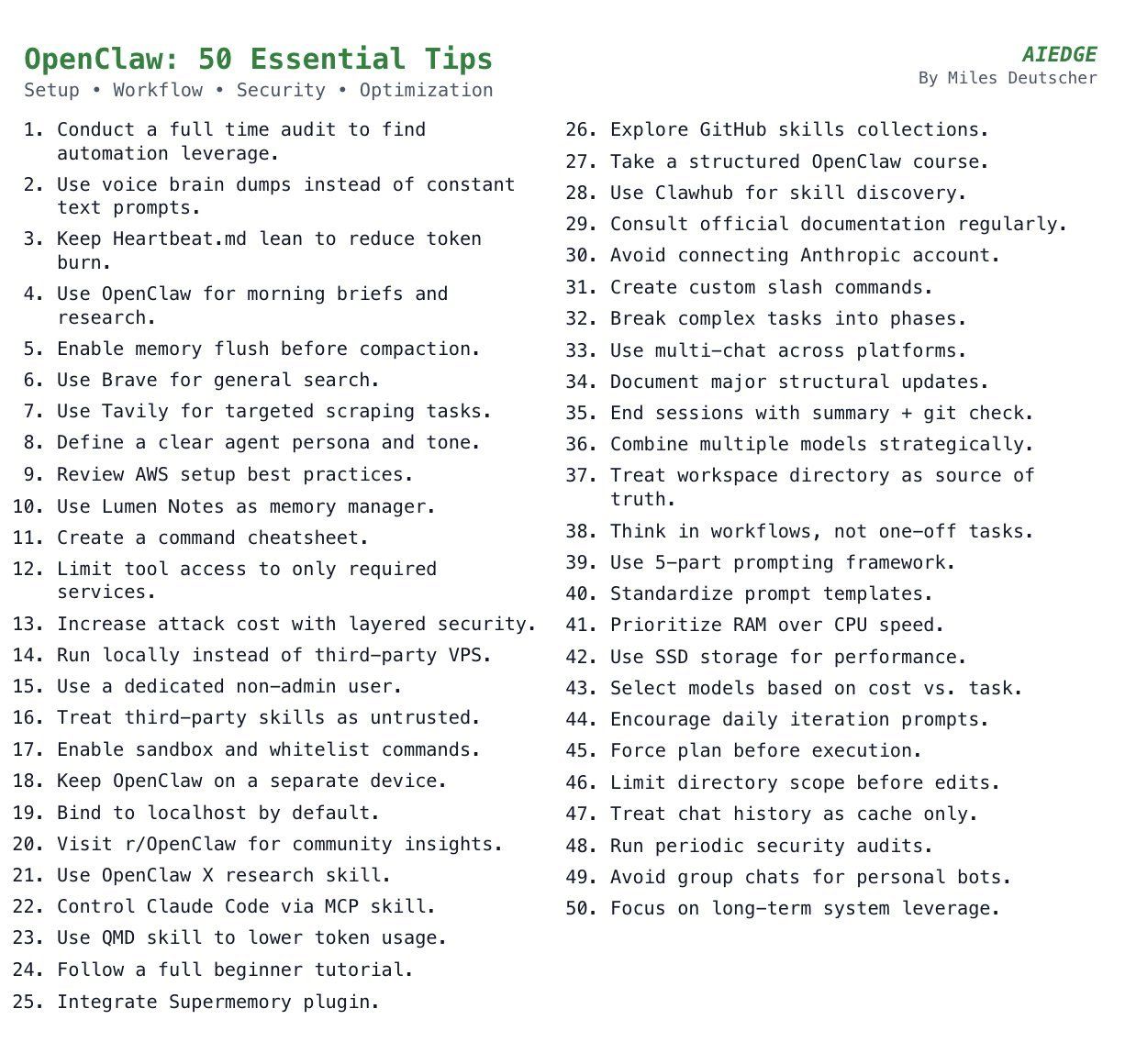

Selected from AI Edge's list of 50 best practices. I think these are the five that hit hardest in a daily workflow:

1. Talk instead of type. Open a voice memo, speak your thoughts for two minutes, and paste the transcript in. It's several times faster than typing, especially when you need to describe complex context or are in the middle of a brainstorm.

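2. Write a SOUL.md file. Add it to your workspace: "You are [role]. Be [traits]. Never [limits]." Claude Code automatically loads CLAUDE.md at the start of each session, so put these lines there or import the file from CLAUDE.md with an @SOUL.md line. Output then stays consistent across sessions without you having to re-explain everything from scratch.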

3. Break big tasks into phases. Instead of "build me an app," say: "Phase 1: outline features. Phase 2: create structure. Phase 3: build MVP." The agent handles each step far better than one sweeping instruction.

4. Make the agent plan before it acts. Add to your system prompt: "Before any action, outline your plan and wait for approval." Saves hours of cleanup when the agent heads in the wrong direction.

5. End every session with a summary. Before closing, ask: "Summarize what changed, list files modified, and confirm everything is committed." You're far less likely to lose work.
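Tips 3 and 4 combine into a simple loop: plan each phase, wait for a yes, then act. The sketch below is a plain-Python illustration of that control flow under stated assumptions, not how Claude Code works internally: `run_in_phases`, `make_plan`, `approve`, and `execute` are hypothetical names, and the lambdas stand in for the agent and the human reviewer.

```python
from typing import Callable, List

def run_in_phases(
    phases: List[str],
    make_plan: Callable[[str], str],
    approve: Callable[[str, str], bool],
    execute: Callable[[str, str], str],
) -> List[str]:
    """Hypothetical helper: plan each phase first, act only if the plan is approved."""
    results: List[str] = []
    for phase in phases:
        plan = make_plan(phase)            # outline the plan before any action
        if not approve(phase, plan):       # human approval gate
            # Stop instead of letting the agent keep going in the wrong direction.
            results.append(f"stopped at {phase!r}: plan rejected")
            break
        results.append(execute(phase, plan))
    return results

# Usage with stand-in functions; the reviewer rejects the Phase 3 plan.
phases = ["Phase 1: outline features", "Phase 2: create structure", "Phase 3: build MVP"]
log = run_in_phases(
    phases,
    make_plan=lambda p: f"plan for {p}",
    approve=lambda p, plan: not p.startswith("Phase 3"),
    execute=lambda p, plan: f"done: {p}",
)
print(log)
```

Phases 1 and 2 run; the rejected Phase 3 plan stops the loop before anything in that phase is executed.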

> PRESENTED BY 1440 MEDIA

Every headline satisfies an opinion. Except ours.

Remember when the news was about what happened, not how to feel about it? 1440's Daily Digest is bringing that back. Every morning, they sift through 100+ sources to deliver a concise, unbiased briefing — no pundits, no paywalls, no politics. Just the facts, all in five minutes. For free.

> TIP OF THE DAY

Your agent needs a harness, not just a framework

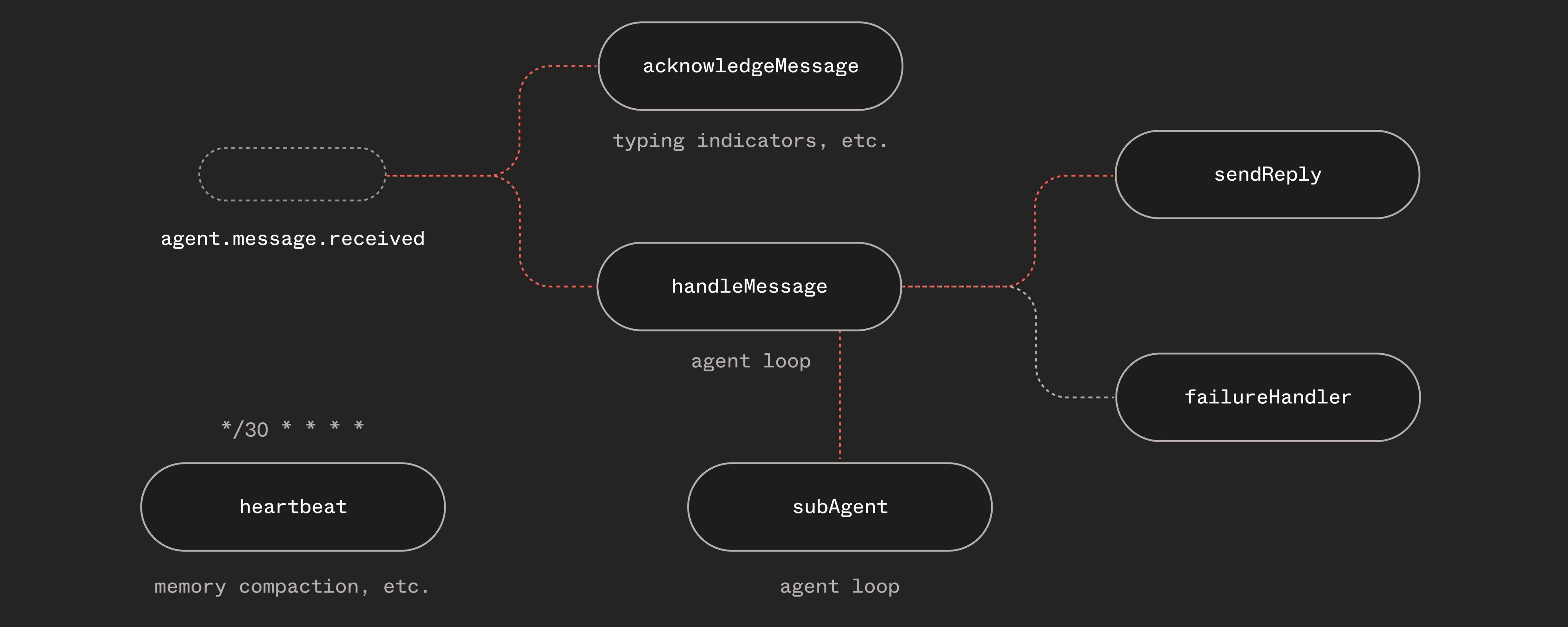

Dan Farrelly, co-founder of Inngest, asks a question every AI builder should sit with: why do agents still fail in such basic ways?

The answer is not a weak LLM or a bad prompt. It is the missing harness, the connective layer that holds the whole system together. The LLM is the engine. Tools are peripherals. Memory is storage. But what handles a timeout on loop five? What prevents two messages from colliding?

Many AI frameworks rebuild this layer from scratch: retry logic, state persistence, job queues, event routing. The same wheels get reinvented every week.

The real lesson: before you pick another framework, ask whether your harness is already in place. Durable-execution tools like Inngest and Temporal already handle the hardest parts (retries, state, queues) before you write a single line of agent logic.
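To make the harness idea concrete, here is a minimal plain-Python sketch of two of its jobs: retrying a failed step with backoff, and checkpointing results so a restart doesn't redo finished work. It is not Inngest's or Temporal's API; `run_durable_step`, `StepTimeout`, and the JSON state file are hypothetical stand-ins. It also deliberately ignores concurrency (the "two messages colliding" case), which real tools handle with locking or per-key queues.

```python
import json
import tempfile
import time
from pathlib import Path
from typing import Any, Callable, Dict

class StepTimeout(Exception):
    """Hypothetical error a tool call raises when it runs past its time budget."""

def run_durable_step(
    step_id: str,
    fn: Callable[[], Any],
    state_file: Path,
    max_attempts: int = 3,
    base_delay: float = 0.5,
) -> Any:
    """Retry fn until it succeeds, then checkpoint the result under step_id.

    Later calls with the same step_id return the saved result instead of re-running fn.
    Assumes fn's result is JSON-serializable. Not safe for concurrent writers.
    """
    state: Dict[str, Any] = json.loads(state_file.read_text()) if state_file.exists() else {}
    if step_id in state:
        return state[step_id]  # finished in an earlier run: skip the work
    for attempt in range(1, max_attempts + 1):
        try:
            result = fn()
            break
        except (StepTimeout, ConnectionError):
            if attempt == max_attempts:
                raise  # out of retries: surface the error
            time.sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
    state[step_id] = result
    state_file.write_text(json.dumps(state))  # checkpoint before moving on
    return result

# Usage: a tool that times out twice, then succeeds.
calls = {"n": 0}

def flaky_tool() -> Dict[str, str]:
    calls["n"] += 1
    if calls["n"] < 3:
        raise StepTimeout("tool timed out")
    return {"status": "ok"}

state_path = Path(tempfile.mkdtemp()) / "agent_state.json"
print(run_durable_step("loop-5-search", flaky_tool, state_path, base_delay=0.01))
print(run_durable_step("loop-5-search", flaky_tool, state_path))  # served from the checkpoint
print(calls["n"])  # 3: the second call never re-ran the tool
```

The first call succeeds on the third attempt; the second returns the saved result without touching the tool again.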

> WORTH READING

I read it and you should too

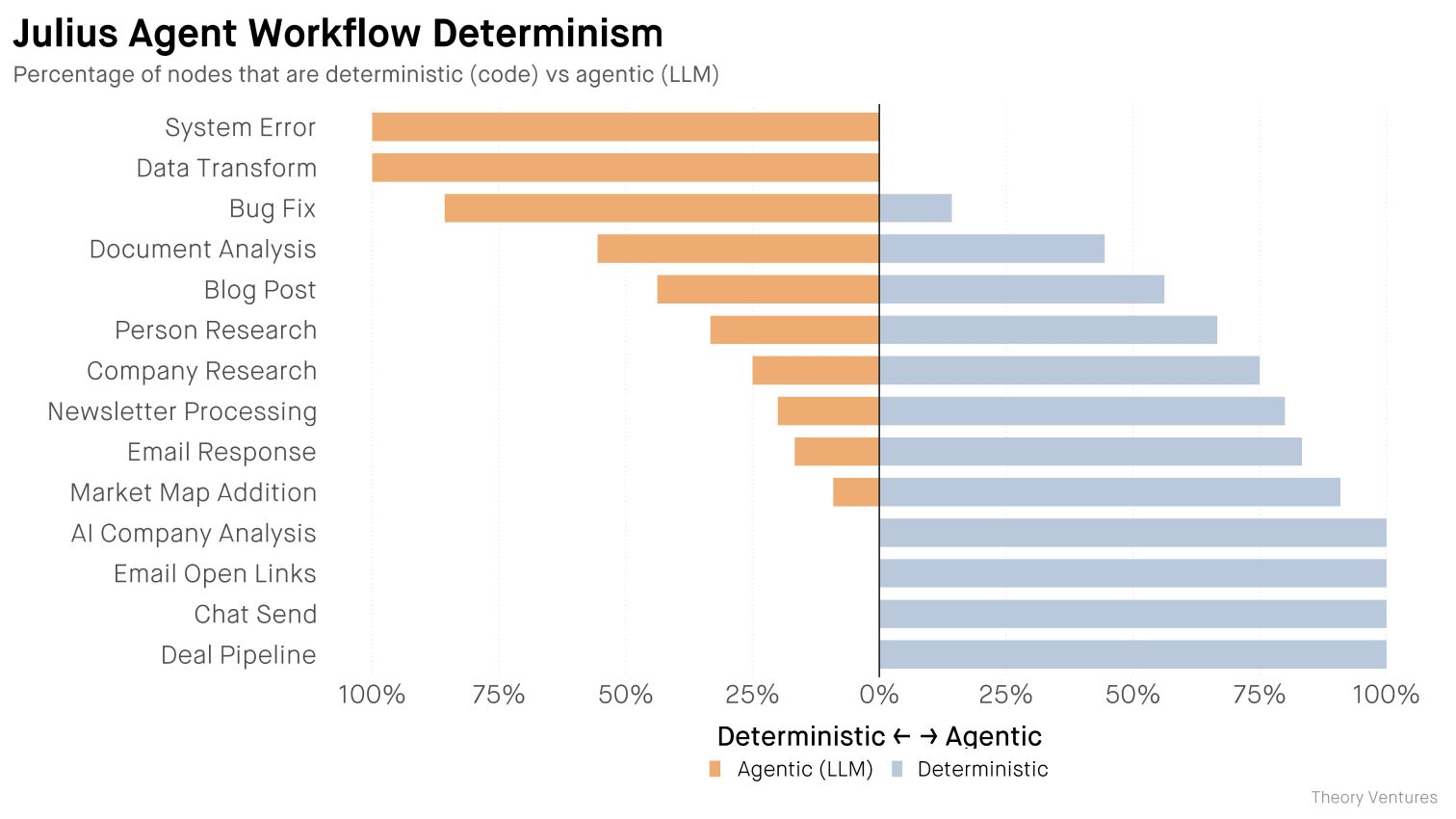

Tomasz Tunguz, Theory Ventures - after six months of building real agents, the VC shares what actually works: 65% of the nodes in his workflows now run as deterministic code, not LLM calls.

The lesson is that AI doesn't need to do everything. It just needs to handle what code can't. Blueprints over prompts. Short post, but it may change how you think about agent architecture.
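Here is a minimal sketch of that "code first, LLM for the rest" pattern. It is not Tunguz's actual workflow: the support-ticket classifier, its regex rules, and `call_llm` (a stub standing in for any real model client) are all hypothetical. Clear cases are handled by deterministic rules, only the ambiguous remainder reaches the model, and the model's answer is checked by code before it is used.

```python
import re
from typing import Callable

ALLOWED = {"billing", "account", "other"}

def call_llm(prompt: str) -> str:
    """Hypothetical stub for a model call; replace with your provider's client."""
    return "Other\n"

def classify_ticket(text: str, llm: Callable[[str], str] = call_llm) -> str:
    # Deterministic rules first: cheap, testable, and identical on every run.
    if re.search(r"\b(refund|invoice|charged?)\b", text, re.IGNORECASE):
        return "billing"
    if re.search(r"\b(password|login|2fa)\b", text, re.IGNORECASE):
        return "account"
    # Only what the rules can't decide goes to the LLM...
    answer = llm(f"Classify this support ticket as billing, account, or other:\n{text}")
    answer = answer.strip().lower()
    # ...and code validates the output before anything downstream trusts it.
    return answer if answer in ALLOWED else "other"

print(classify_ticket("I was charged twice"))        # billing (rule, no LLM call)
print(classify_ticket("Can't reset my password"))    # account (rule, no LLM call)
print(classify_ticket("The app feels slow lately"))  # other (via the LLM stub)
```

The more cases the rules absorb, the fewer model calls the workflow makes, and every rule-handled case is reproducible.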

Ruxandra Teslo, Asimov Press - a direct rebuttal to Amodei's claim that AI will compress clinical trials to one year. The author, a health policy researcher, argues that the real bottlenecks are institutional friction, regulation, and human biology, not intelligence.

Worth reading this week in particular, as the Anthropic-Pentagon standoff raises bigger questions about how fast AI actually moves in the physical world.

Found this useful?

👉 Forward it to someone trying to keep up with AI.

👉 Read online: techzip.beehiiv.com

Tech.zip Newsletter

Zipping what truly matters in the AI era.