ABOVE THE NOISE

AI didn't take the weekend off.

Details of OpenAI's first-ever hardware device with Jony Ive leaked, Google publicly called time of death on two popular AI startup business models, and a viral essay painted a terrifying scenario where AI succeeds so well it tanks the global economy.

New week: the race is shifting from models to products, from prompts to context, from hype to survival.

THE BRIEF

What happened?

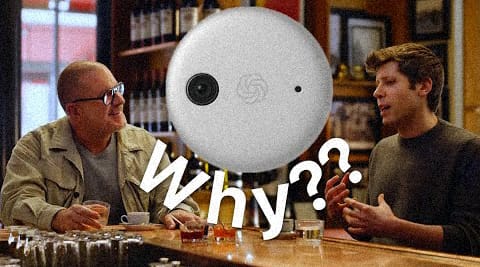

According to The Information, the first hardware product from the OpenAI–Jony Ive collaboration (Ive is Apple's former chief design officer) will be a $200–$300 smart speaker with a built-in camera and facial recognition for suggesting actions and making purchases. A 200+ person team is developing it, targeting an early 2027 launch. AI-powered smart glasses are also in the pipeline but won't hit production before 2028.

Why does it matter?

This is OpenAI's first step into hardware - directly competing with Amazon (Alexa+), Apple (Siri + HomePod), and Google (Nest). The key detail: this device won't just listen for commands. It will "observe" its surroundings and proactively nudge users toward actions. AI is shifting from "wait for you to ask" to "act on your behalf" - right inside your physical space.

LEFT CURVE TAKE:

A smart speaker sounds "old," but the strategy behind it isn't.

OpenAI is betting on AI-first hardware - devices designed around AI rather than AI bolted onto existing products. If Apple needed 3 years to make Siri barely functional, OpenAI might only need 1, but the real question is whether consumers are ready to let an AI camera "read" their living room.

LEFT CURVE PICKS

Google's VP of global startups, Darren Mowry, said it plainly: startups that simply wrap LLMs with a thin UI layer (LLM wrappers) and platforms that aggregate multiple models into one interface (AI aggregators) have their "check engine light" on. The era of slapping a UI on GPT and raising a round is over.

→ If your AI startup doesn't have a real moat, 2026 is the year to build one or exit the game.

Dharmesh Shah (HubSpot co-founder) dedicated this week's simple.ai newsletter to Context Engineering - a concept championed by Shopify CEO Tobi Lütke and Andrej Karpathy. The core idea: instead of optimizing how you ask AI, optimize what the AI has access to when it thinks. With context windows now reaching 1 million tokens, the game has fundamentally changed.

→ Prompt engineering taught us to talk to AI. Context engineering teaches us to think with AI.
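For the builders: here is a minimal sketch of what "optimizing what the AI has access to" can look like in practice. It is a toy under stated assumptions, not anyone's published method: the `Snippet` type, the relevance scores, and the word-count token estimate are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    text: str
    relevance: float  # hypothetical score, e.g. produced by a retriever


def rough_token_count(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def build_context(snippets: list[Snippet], budget: int) -> str:
    """Keep the most relevant snippets that fit within the token budget."""
    chosen = []
    used = 0
    for s in sorted(snippets, key=lambda s: s.relevance, reverse=True):
        cost = rough_token_count(s.text)
        if used + cost <= budget:
            chosen.append(s.text)
            used += cost
    return "\n".join(chosen)


snippets = [
    Snippet("Customer is on the Pro plan.", 0.9),
    Snippet("Company holiday party photos are in the shared drive.", 0.1),
    Snippet("Last ticket: refund requested for order 1234.", 0.8),
]

# With a tight budget, the low-relevance snippet is left out.
context = build_context(snippets, budget=14)
print(context)
```

The point of the sketch: even with a huge context window, someone still has to decide what goes in. Here that decision is a simple sort-and-fit; a real system would likely use a proper tokenizer and a better relevance signal.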

Citrini Research published a provocative thought exercise: if AI keeps delivering on expectations, it could create "Ghost GDP" - output that shows up in national accounts but never circulates through the real economy. Machines replace workers, displaced workers spend less, companies invest more in AI, repeat. In this scenario, the S&P 500 drops 38% from its peak, unemployment hits 10.2%, and the consumer economy withers.

→ This isn't a prediction, but it's the question few dare to ask: if AI wins, who loses?

TRY THIS TODAY

In Anthropic's latest video, "Building Effective Agents", engineers Erik and Barry share hard-won lessons from working with enterprise customers.

Here are the 3 most important takeaways:

1. You don't always need an agent. If you know the number of steps in advance → that's a workflow (a fixed chain of prompts). Agents are only needed when you don't know how many steps it'll take - e.g., coding, iterative search, or customer support.

2. Tool descriptions matter as much as your prompt. Many developers craft beautiful prompts but leave tool descriptions bare-bones, with parameters named "A" and "B." Remember: everything lives in the same context window - bad tool descriptions = bad agent performance.

3. Consumer agents are overhyped right now. According to Erik (Anthropic Research): having an agent book your entire vacation sounds great, but fully describing your preferences to the agent is almost as much work as booking it yourself. Agents work best on verifiable tasks (coding, search), not complex personal ones. Not yet.
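Takeaways 1 and 2 can be sketched in a few lines of Python. This is a hedged illustration, not code from the video: `run_workflow`, `run_agent`, and both tool dictionaries are hypothetical names, and the lambdas stand in for real model calls.

```python
from typing import Callable


def run_workflow(text: str, steps: list[Callable[[str], str]]) -> str:
    """Workflow: the number of steps is known in advance."""
    for step in steps:
        text = step(text)
    return text


def run_agent(
    state: int,
    act: Callable[[int], int],
    is_done: Callable[[int], bool],
    max_steps: int = 10,
) -> tuple[int, int]:
    """Agent: loop until a check passes; the step count is not known up front."""
    steps = 0
    while not is_done(state) and steps < max_steps:
        state = act(state)
        steps += 1
    return state, steps


# Workflow: exactly two steps, fixed ahead of time.
summary = run_workflow(
    "draft",
    [lambda t: t + " -> outline", lambda t: t + " -> final"],
)

# Agent: keeps acting until the stop condition holds (or the cap is hit).
final, steps_taken = run_agent(100, act=lambda n: n // 2, is_done=lambda n: n < 5)

# Tool descriptions (made-up format): the model reads these in the same
# context window as the prompt, so names and descriptions carry meaning.
vague_tool = {"name": "f", "parameters": {"a": "string", "b": "string"}}
clear_tool = {
    "name": "search_orders",
    "description": "Look up a customer's orders by email and date range.",
    "parameters": {
        "customer_email": "Email address of the customer",
        "since_date": "ISO date; only return orders placed on or after this date",
    },
}
```

Note the `max_steps` cap in the agent loop: because the number of iterations is open-ended, a hard limit is a cheap safeguard against a loop that never satisfies its own stop condition.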

Apply today: If you're building an AI product, ask yourself: "If the model gets smarter, does my product get better?" If the answer is "our moat disappears" - you're building the wrong thing.

LEFT CURVE SIGNAL

AI's context window keeps getting bigger, but yours doesn't.

The most important skill of 2026 isn't knowing more. It's knowing what's worth putting in.

Found this useful?

👉 Forward it to someone trying to keep up with AI.

Read online: leftcurve.beehiiv.com