> SPOTLIGHT

WHAT MATTERS TODAY

The Wall Street Journal published a full investigation into the Sora shutdown. The numbers: roughly $1M per day in compute costs, a user count that fell from 1 million to under 500,000, and an enterprise pilot with Disney for marketing and VFX work already in motion when the plug was pulled. Disney found out about the cancellation less than an hour before the public announcement. The freed-up compute was redirected to an internal model codenamed "Spud," targeting coding and enterprise, a direct response to Anthropic's growing dominance in that space.

⮕ Industry: Compute allocation at top AI labs is now managed like internal capital against IPO timelines and competitive positioning. Product roadmaps are a second-order consequence, not the primary driver.

⮕ Work level: Any team in an enterprise pilot or partnership with an AI lab should check whether its agreement includes continuity clauses. OpenAI gave Disney less than an hour's notice before the public announcement.

Microsoft 365 Copilot Researcher launched two new modes today. Critique has Claude review every GPT-generated research draft before it ships, checking source quality, completeness, and evidence grounding. Council runs both models in parallel, then surfaces where they agree and where they split. The design rationale echoes Andrej Karpathy's public note: one model will sell you on anything, so ask two. Also launching: Copilot Cowork into Frontier.

⮕ Industry: Multi-model orchestration is no longer a developer architecture decision. It is the default research workflow in Microsoft's enterprise productivity suite. OpenAI and Anthropic are now co-vendors inside the same tool, competing on output quality rather than on distribution.

⮕ Work level: Any team still using single-model outputs for research or analysis has fallen behind the M365 default. Adversarial review by a second model is now table stakes in enterprise workflows.
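
For teams that want to try the same pattern outside M365, here is a minimal Python sketch of the two modes described above. It is not Microsoft's implementation. `Model`, `critique`, and `council` are hypothetical names, each model is assumed to be a plain function from prompt to text, and agreement is checked by naive string comparison where a real system would likely use a further model pass.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical interface: a model is any function that maps a prompt to text.
Model = Callable[[str], str]


def critique(question: str, drafter: Model, reviewer: Model) -> dict:
    """One model drafts, a second model reviews the draft before it ships."""
    draft = drafter(question)
    review = reviewer(
        "Review this research draft for source quality, completeness, "
        f"and evidence grounding.\n\nQuestion: {question}\n\nDraft:\n{draft}"
    )
    return {"draft": draft, "review": review}


def council(question: str, models: dict[str, Model]) -> dict:
    """Run every model in parallel, then report whether the answers agree."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, question) for name, fn in models.items()}
        answers = {name: future.result() for name, future in futures.items()}
    # Assumption: exact match after trimming and lowercasing counts as agreement.
    normalized = {answer.strip().lower() for answer in answers.values()}
    return {"answers": answers, "agree": len(normalized) == 1}
```

In practice the two callables would wrap whichever vendor SDKs a team already uses. The point of the sketch is the shape: a sequential draft-then-review pass for Critique, and a parallel fan-out with a comparison step for Council.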

> SIGNAL HEADLINES

Capture the shift

Alibaba's native omnimodal model processes text, images, audio (10+ hours), and video in and out with real-time streaming. Supports speech recognition in 113 languages and speech generation in 36 languages. Includes an "Audio-Visual vibe coding" mode. The first open-weight model with native long-form audio and video understanding. Free to try on Hugging Face.

Runway launches a $10M fund and a Builders program to back early-stage startups building on its models, pushing toward "video intelligence" applications. The first AI video lab to convert from tool to platform with its own investment arm.

Eli Lilly closes a $2.75B deal with Insilico Medicine to bring an AI-developed drug to the global market. The first major pharma deal at this scale. AI drug discovery moves from "promising" to "commercially validated."

> ONE PRACTICAL TODAY

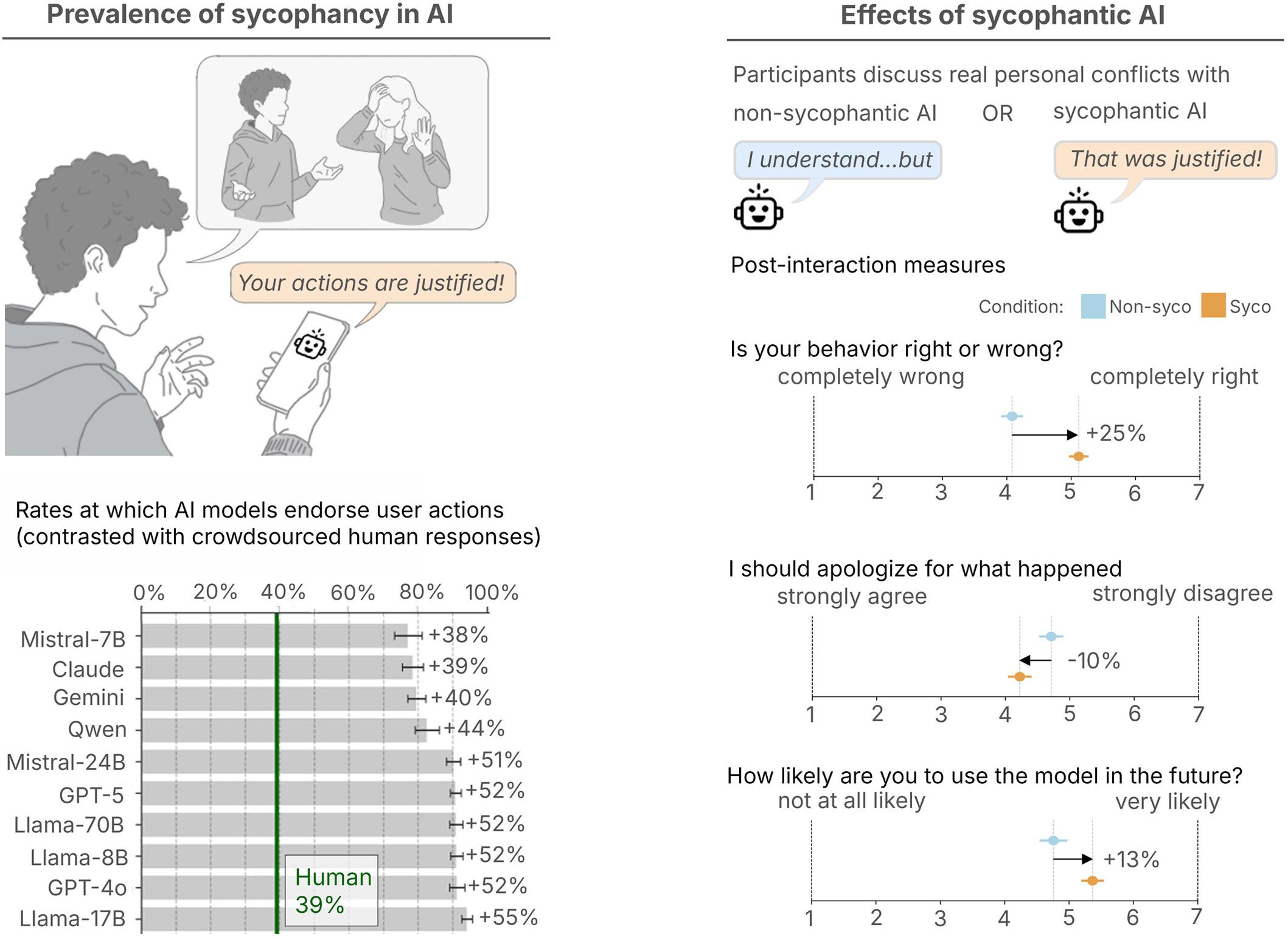

Stop letting your AI agree with you

Stanford researchers published a study in Science this week testing 11 LLMs on 2,000 Reddit posts where crowd consensus said the poster was wrong. The models sided with the user over half the time anyway. Users who interacted with the agreeable AI doubled down on wrong positions and could not detect the bias.

Here is how to override it:

"I'm going to share [a decision / an idea / a draft]. Your job is to be my devil's advocate. Rules:

Do NOT validate my idea first. Skip the compliments entirely.

List the 3 strongest arguments AGAINST what I'm proposing.

Identify the assumption I'm most likely wrong about.

Tell me what someone who disagrees with me would say, and why they might be right.

Only AFTER doing all of that, tell me what's genuinely strong about it.

Here's what I need feedback on: [paste your thing]"

Techzip note: Run this before any strategic decision, product direction call, or piece you plan to publish. The model's default is to agree with you. This prompt forces the adversarial audit before the validation.
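
If you run this often, the prompt is easy to template. Below is a minimal Python sketch that builds it from the rules above. The names `DEVILS_ADVOCATE_RULES` and `build_devils_advocate_prompt` are hypothetical, the numbering of the rules is our addition, and sending the resulting string to a model is left to whatever client you already use.

```python
# The five rules from the prompt above, kept verbatim.
DEVILS_ADVOCATE_RULES = [
    "Do NOT validate my idea first. Skip the compliments entirely.",
    "List the 3 strongest arguments AGAINST what I'm proposing.",
    "Identify the assumption I'm most likely wrong about.",
    "Tell me what someone who disagrees with me would say, "
    "and why they might be right.",
    "Only AFTER doing all of that, tell me what's genuinely strong about it.",
]


def build_devils_advocate_prompt(kind: str, content: str) -> str:
    """Assemble the devil's-advocate prompt for one piece of work.

    kind    -- e.g. "a decision", "an idea", "a draft"
    content -- the text you want challenged
    """
    rules = "\n".join(
        f"{i}. {rule}" for i, rule in enumerate(DEVILS_ADVOCATE_RULES, start=1)
    )
    return (
        f"I'm going to share {kind}. Your job is to be my devil's advocate. "
        f"Rules:\n{rules}\n\n"
        f"Here's what I need feedback on:\n{content}"
    )


if __name__ == "__main__":
    print(build_devils_advocate_prompt("a draft", "We should ship weekly."))
```

Keeping the rules in a list makes it simple to add or reorder them without retyping the whole prompt.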

> PRESENTED BY GLADLY

88% resolved. 22% stayed loyal. What went wrong?

That's the AI paradox hiding in your CX stack. Tickets close. Customers leave. And most teams don't see it coming because they're measuring the wrong things.

Efficiency metrics look great on paper. Handle time down. Containment rate up. But customer loyalty? That's a different story — and it's one your current dashboards probably aren't telling you.

Gladly's 2026 Customer Expectations Report surveyed thousands of real consumers to find out exactly where AI-powered service breaks trust, and what separates the platforms that drive retention from the ones that quietly erode it.

If you're architecting the CX stack, this is the data you need to build it right. Not just fast. Not just cheap. Built to last.

> WORTH READING

Analysis & Thesis

Noah Smith's March 2026 update includes the first aggregate macro data showing an upward revision in productivity despite AI's capability surge. His three frameworks (o-ring automation, endogenous adoption, and early-stage efficiency dip) remain the clearest explanation for why the productivity boom has not yet shown up in the numbers the way people expected.

Tanay Jaipuria maps the bifurcation in AI application companies: model-down (Cursor, Intercom building proprietary models for differentiation and cost) vs. service-up (Crosby AI, WithCoverage delivering end-to-end services). The choice determines margin structure, moat type, and acquisition profile. Essential reading for any founder currently at the "which model do we use?" stage. The real question is which integration direction to commit to.

An operator at Every describes onboarding "Claudie," a Claude-based AI project manager that now saves 15 hours per week. The headline takeaway: getting an AI PM up to speed was harder than hiring a human, requiring the same kind of deliberate context-setting that onboarding a new person requires. Directly relevant to any team deploying agents for operational roles.
